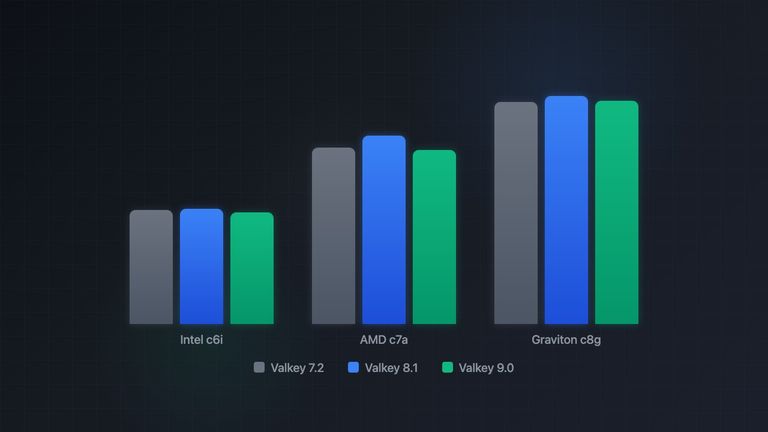

Valkey Performance: 7.2 vs 8.1 vs 9.0 on AWS

Valkey has been moving fast since forking from Redis in early 2024. Three major releases in two years, each promising performance improvements. But how much of that translates to real throughput gains when running an actual workload on top of it?

We ran BullMQ against Valkey 7.2, 8.1, and 9.0 on three different AWS instance families to find out. The goal was not just to measure raw server speed, but to understand how Valkey version choice interacts with hardware selection, and what combination gives you the most jobs per dollar.

Test Setup

All benchmarks ran on xlarge instances (4 vCPUs, 8 GB RAM) in us-east-1, each running

both Valkey and the BullMQ worker on the same machine to eliminate network variability:

| Instance | CPU | Architecture | On-Demand $/hr |

|---|---|---|---|

| c6i.xlarge | Intel Xeon 8375C (Ice Lake) | x86_64 | $0.170 |

| c7a.xlarge | AMD EPYC 9R14 (Genoa) | x86_64 | $0.153 |

| c8g.xlarge | AWS Graviton 4 | ARM64 | $0.136 |

Valkey ran in Docker with persistence disabled (--save "" --appendonly no) to eliminate

disk I/O as a variable and isolate the comparison to pure in-memory performance.

Each test ran 5 times and we report the mean. BullMQ v5.67, Node.js v24, Amazon Linux 2023.

The full benchmark code is at bullmq-valkey-bench.

Raw Valkey Speed: PING Latency

Before looking at BullMQ workloads, we measured raw Valkey round-trip latency with a simple PING command. This isolates the server and network stack from any application overhead.

The improvement here is significant and consistent across all hardware. Valkey 8.1 brought a step-change in raw latency, roughly 22-29% faster than 7.2, and 9.0 held those gains. Graviton posts the lowest absolute latency at 0.032 ms, followed closely by AMD at 0.045 ms. Intel trails at 0.069 ms, more than double the Graviton figure.

This tells us the Valkey engine itself got meaningfully faster. The question is whether that translates to real workload throughput.

Bulk Job Insertion

Adding 50,000 jobs at once via addBulk(), which pipelines Redis commands for maximum

throughput:

AMD leads at 39,312 j/s on Valkey 8.1, with Graviton close behind at 38,007 j/s. Intel caps at 29,204 j/s. Across versions, 8.1 consistently tops this test by 5-11% over 7.2, while 9.0 sits in between.

Bulk insertion is heavily pipelined, so the gains from lower per-command latency get partially hidden by the batching. Still, 8.1 manages to squeeze out a measurable improvement.

Single Job Insertion

A more realistic scenario: inserting 5,000 jobs individually with concurrent add() calls:

This test hits Valkey with individual Lua script executions, one per job. AMD leads at nearly 35K j/s, with Graviton around 29K and Intel at 18K. Valkey 8.1 shows a consistent 3-6% gain over 7.2, with 9.0 holding most of that improvement.

Processing Overhead

An important benchmark for queue users: how fast can BullMQ cycle through jobs when the jobs themselves do no work? This measures the pure overhead of the queue machinery, all the Lua scripts for dequeuing, locking, acknowledging, and cleaning up.

Concurrency = 1

At concurrency 1, everything is sequential: fetch a job, process it, acknowledge it, repeat. Graviton stands out here at over 10,000 j/s, roughly double the Intel figure. This is where ARM's low latency pays off the most, every microsecond saved on the round-trip directly multiplies throughput.

Version differences within each platform are small (2-5%), with 8.1 slightly ahead on AMD and Graviton.

Concurrency = 10

Scaling to 10 concurrent workers per process, AMD reaches 29,814 j/s on Valkey 8.1. Graviton sits at ~27K j/s across all versions, remarkably stable. Both are roughly double Intel's ~15K j/s.

An interesting detail: Intel actually sees a small gain on 9.0 at this concurrency (16,005 j/s vs 15,218 on 7.2), while AMD shows 8.1 as the clear leader.

Concurrency = 50

At high concurrency, AMD peaks at 34,207 j/s on Valkey 8.1, the highest throughput in the entire benchmark. But 9.0 drops to 30,483 j/s, an 11% regression from 8.1.

Graviton tells a completely different story: all three versions land within 1% of each other at ~28.3K j/s. This is the most stable platform across Valkey versions, and it suggests that Graviton's low-latency memory subsystem keeps the pipeline fed efficiently regardless of Valkey's internal changes.

Intel shows a modest improvement from 7.2 to 8.1 (17K → 18K) with 9.0 splitting the difference.

CPU-Bound Processing

Processing jobs that perform real CPU work (1,000 sin/cos operations per job):

When jobs do actual computation, AMD leads at ~21.8K j/s, Graviton follows at ~18.9K, and Intel sits at ~10K. Version differences essentially vanish because the bottleneck shifts from Valkey to the Node.js event loop. The queue overhead becomes a small fraction of the total job time, so a 5% faster Valkey makes no measurable difference.

This is good news for most real-world workloads: your Valkey version choice will not significantly impact throughput if your jobs do meaningful work.

A Note on io-threads

Valkey supports io-threads to parallelize network I/O across multiple cores. We tested

this configuration and found no measurable benefit for BullMQ workloads.

The reason is architectural: io-threads only parallelize the reading and writing of data from client sockets. All command execution, including the Lua scripts that BullMQ relies on for atomic job operations, still runs on the main thread. Since BullMQ's bottleneck is Lua script execution rather than network I/O, adding I/O threads does not move the needle.

This is worth keeping in mind if you are evaluating multi-threaded datastores like DragonflyDB as well. Dragonfly uses a shared-nothing architecture where each thread owns a slice of the keyspace, but BullMQ uses hash tags to keep all keys for a given queue on the same slot. One queue means one thread, regardless of how many cores the server has. The only way to benefit from multi-threaded execution is to spread work across multiple queues with different hash tags. When you do, the performance gains can be massive: with N queues on N threads, Dragonfly can theoretically deliver N times the single-queue throughput. Redis and Valkey Cluster offer a similar scaling path by sharding queues across multiple nodes. We plan to explore both approaches in a future benchmark.

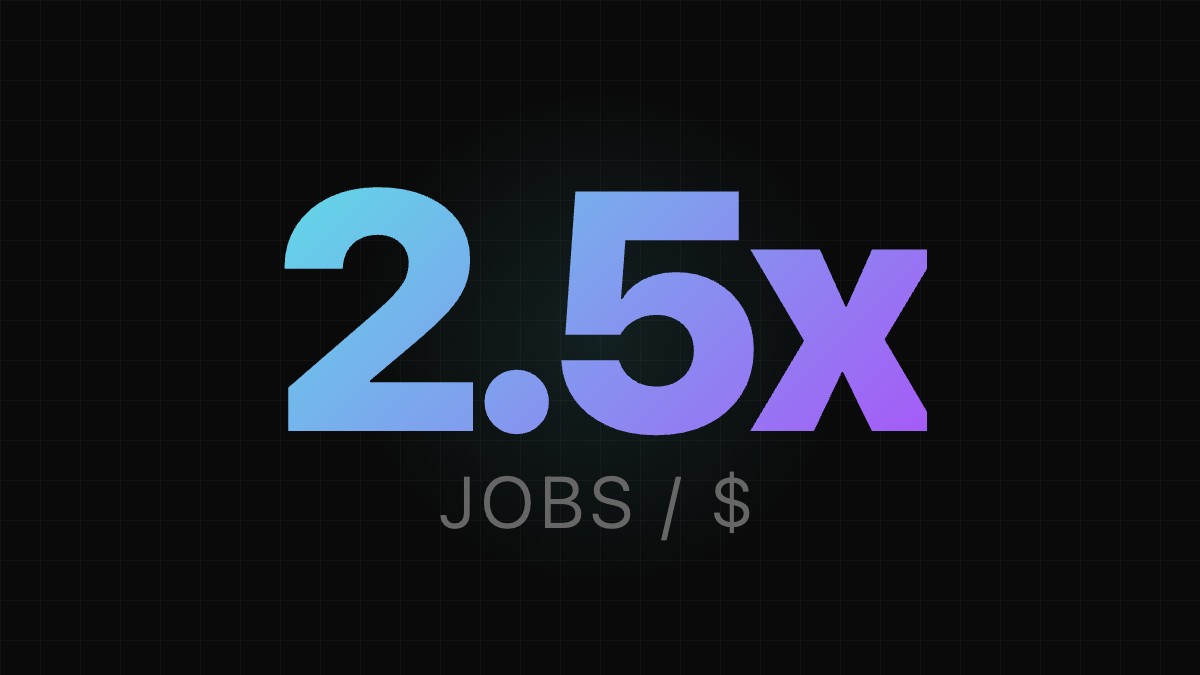

The Cost Angle

Raw throughput only tells half the story. What often matters in production is throughput per dollar.

Using on-demand pricing in us-east-1 (March 2026) and the best-performing Valkey version

for each platform (8.1 in all cases):

| Instance | $/hr | Best Overhead (c=1) | Jobs per $1 | vs Intel |

|---|---|---|---|---|

| Intel c6i.xlarge | $0.170 | 5,140 j/s | 108.8M | baseline |

| AMD c7a.xlarge | $0.153 | 8,409 j/s | 197.8M | 1.8x |

| Graviton c8g.xlarge | $0.136 | 10,154 j/s | 268.8M | 2.5x |

Graviton delivers 2.5x more jobs per dollar than Intel for queue-overhead-bound workloads. Even compared to AMD, Graviton is 36% more cost-efficient. The combination of lower hourly cost and higher per-core throughput makes it difficult to justify Intel for new BullMQ deployments on AWS.

For high-concurrency workloads (c=50), the picture using Valkey 8.1 numbers:

| Instance | $/hr | Overhead c=50 | Jobs per $1 | vs Intel |

|---|---|---|---|---|

| Intel c6i.xlarge | $0.170 | 18,093 j/s | 383.2M | baseline |

| AMD c7a.xlarge | $0.153 | 34,207 j/s | 805.1M | 2.1x |

| Graviton c8g.xlarge | $0.136 | 28,434 j/s | 752.3M | 2.0x |

At high concurrency AMD takes the lead in both absolute throughput and cost efficiency, with Graviton close behind. AMD's advantage at c=50 comes from its raw clock speed advantage under load, while Graviton's lower price keeps it competitive on a per-dollar basis.

Key Takeaways

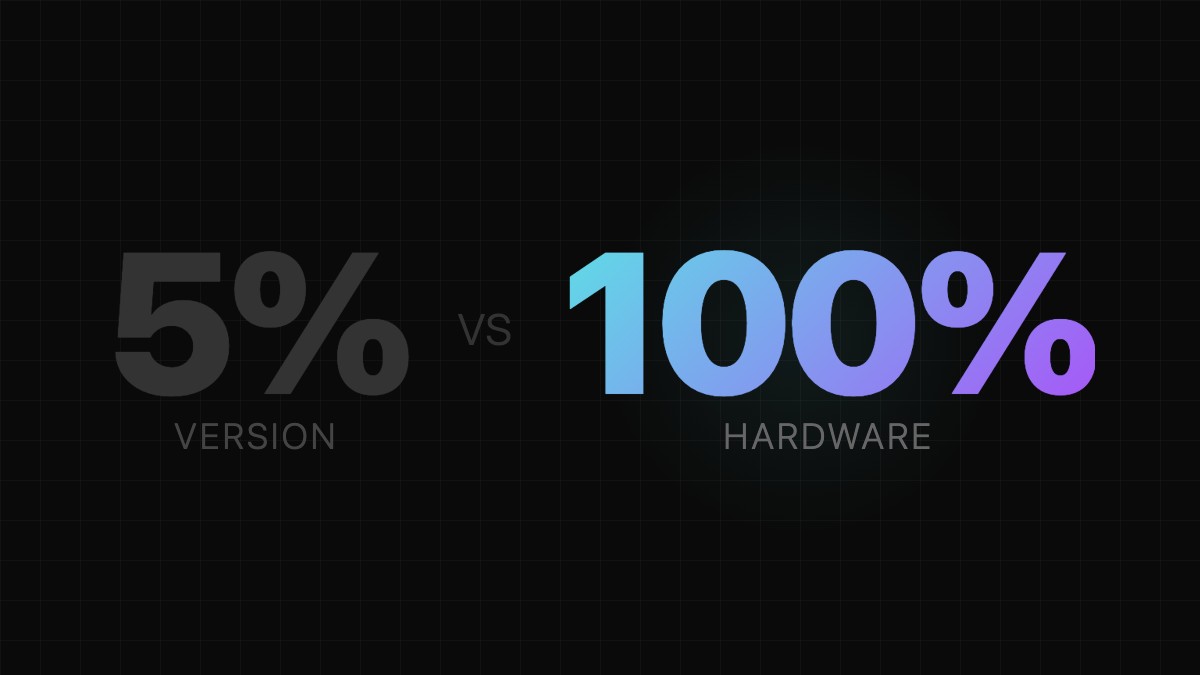

Valkey 8.1 is the sweet spot. It consistently matches or beats both 7.2 and 9.0 across all platforms and workloads. The PING latency improvements from 7.2 carry through to real BullMQ throughput, while 9.0 gives back some of those gains on AMD under high concurrency. If you are running 7.2, upgrading to 8.1 is a clear win. If you are already on 9.0, you are not losing much in practice.

Graviton is the best value. It posts the fastest sequential processing (10,154 j/s at c=1, double the Intel number), the lowest PING latency, and the lowest cost. For BullMQ workloads that are queue-overhead-bound, switching from Intel c6i to Graviton c8g gives you 2.5x more jobs per dollar. It is also the most version-stable platform: all three Valkey versions perform within 1% of each other at c=50.

AMD is the throughput king at high concurrency. If you are running workers at c=50 and need maximum absolute jobs per second, AMD c7a.xlarge with Valkey 8.1 hits 34,207 j/s, the highest number in all our tests.

Version choice matters less than hardware choice. The difference between the best and worst Valkey version on a given platform is typically 5-12%. The difference between Intel and Graviton on the same Valkey version is 50-100%. If you are optimizing for BullMQ throughput, pick the right instance type first, then worry about Valkey versions.

Real workloads level the field. Once jobs do actual work (CPU or I/O), Valkey version differences disappear entirely. The queue overhead becomes a rounding error. This means the version choice is most impactful for fire-and-forget style workloads with very lightweight jobs.

Quick Takes

Short on time? These highlights cover the key findings:

The benchmark source code, Docker Compose files, and GitHub Actions workflow for reproducing these results are available at bullmq-valkey-bench.